Sign Language Interfaces: Why Wearables Are the Final Frontier for SL Equity

As a research team dedicated to the intersection of accessibility and human-computer interaction, we have long viewed the digital world through a specific lens: the "linguistic wall." For the roughly 17.5 million primary sign language users globally, the internet has historically been a text-centric landscape that fails to recognize the spatial, visual, and multi-layered nature of their native languages.

Today, we want to share how our perspective has evolved from identifying these systemic barriers to witnessing a technological shift that is finally making "social presence" a reality for the signing community.

The Foundation: A Roadmap for Change

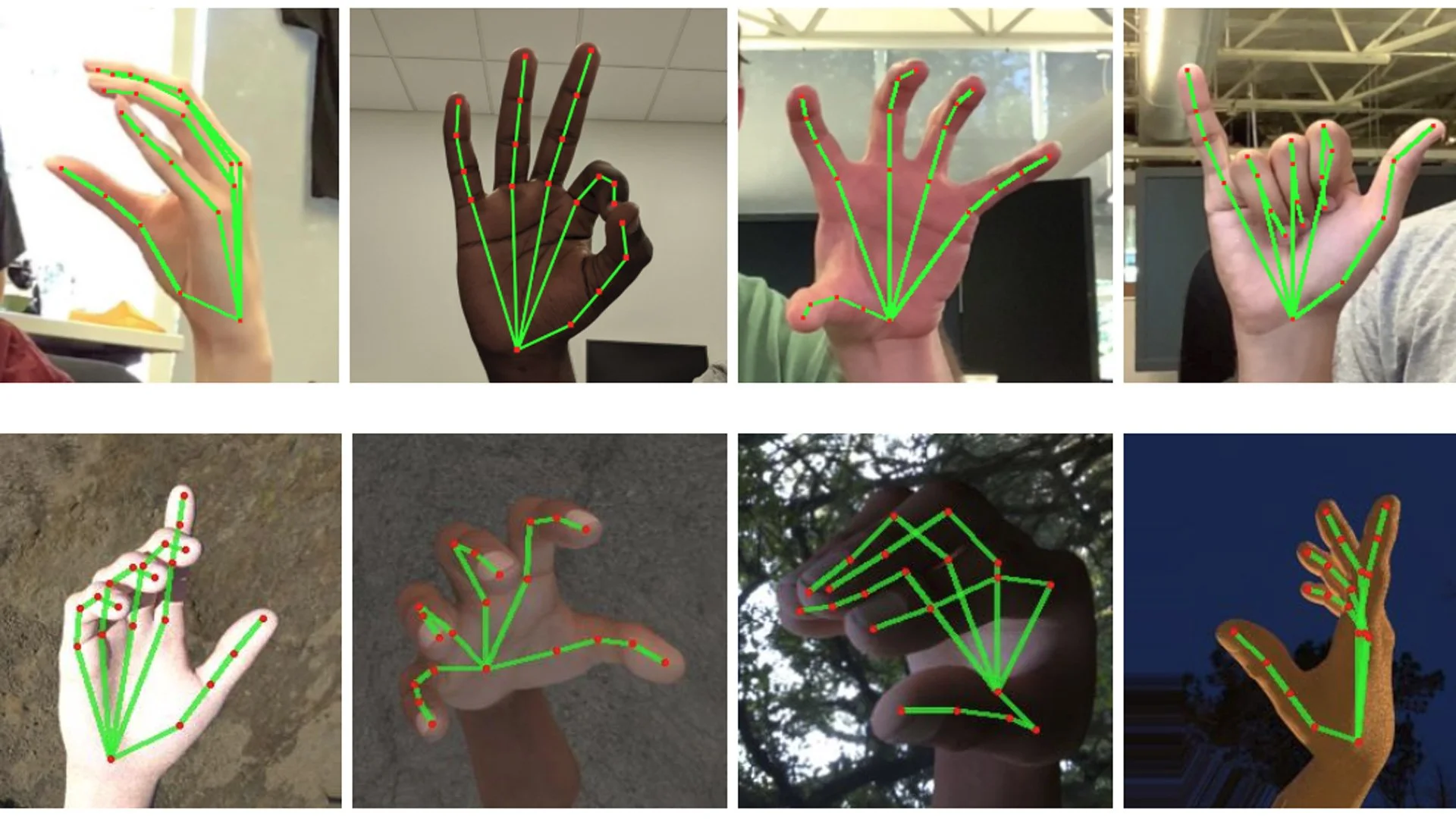

Our journey began with a critical assessment of why technology was leaving signers behind. In a recent research paper, Sign Language Interfaces: Discussing the Field's Biggest Challenges, we outlined a clear roadmap for the community. We identified that the absence of a standardized "written" form for sign languages, combined with a shortage of diverse, representative datasets, created a massive bottleneck for AI development.

We called for five specific actions, ranging from deep community partnerships to the creation of new UI guidelines that move beyond the mouse and keyboard.

For years, the technical constraints of computer vision and the "phone-in-face" nature of translation apps meant that technology often felt like a barrier to natural conversation rather than a bridge. But as we look at the landscape in 2026, the arrival of high-fidelity wearable devices is fundamentally changing the physics of this problem.

The Wearable Revolution: Head-Up and Hands-Free

The transition from handheld screens to "head-up" computing is the breakthrough we have been waiting for. As Maxine Williams, Meta’s VP of Accessibility and Engagement, recently emphasized at CES 2026, the goal of modern inclusive design is to shift the burden of adaptation away from the individual and onto the device.

This is where the "curb-cut" effect comes into play. Just as sidewalk ramps were designed for wheelchair users but benefit everyone from parents with strollers to travelers with luggage, the latest wearable features, like those of the Meta Ray-Ban Display, offer profound "curb-cuts" for the Deaf community.

The Preservation of Eye Contact: In sign language, eye contact and facial expressions are as vital as hand movements. By moving information—like real-time captions or a discreet teleprompter—directly onto a lens, signers can stay engaged with their conversation partner instead of looking down at a phone.

Conversation Focus: New AI-driven audio and visual features allow users to isolate the person they are looking at, filtering out background noise or visual clutter. This creates a focused channel for communication that mirrors the natural way signers interact in busy environments.

The Neural Bridge: Perhaps most exciting is the integration of the Meta Neural Band. By using surface electromyography (sEMG), we can now detect the subtle muscle signals in the wrist. This allows for "invisible" gestures and EMG-based handwriting, addressing one of the core challenges we noted in our CHI 2020 paper: the need for efficient, spatial ways to "write" and input data without a physical keyboard.
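To make the sEMG idea more concrete, here is a minimal sketch of one classic way muscle activity can be picked out of a raw signal: rectify it, smooth it into an envelope, and flag stretches where the envelope stays above a threshold. This is not how the Meta Neural Band works internally, which we do not describe here. The function names, the synthetic signal, the window size, and the threshold are all illustrative assumptions.

```python
import random


def emg_envelope(samples, window):
    """Rectify the signal and smooth it with a trailing moving average."""
    rectified = [abs(s) for s in samples]
    envelope = []
    running = 0.0
    for i, value in enumerate(rectified):
        running += value
        if i >= window:
            running -= rectified[i - window]
        envelope.append(running / min(i + 1, window))
    return envelope


def detect_bursts(envelope, threshold, min_len):
    """Return (start, end) index pairs where the envelope stays above threshold."""
    bursts = []
    start = None
    for i, value in enumerate(envelope):
        if value > threshold and start is None:
            start = i
        elif value <= threshold and start is not None:
            if i - start >= min_len:
                bursts.append((start, i))
            start = None
    if start is not None and len(envelope) - start >= min_len:
        bursts.append((start, len(envelope)))
    return bursts


def synthetic_signal(n=1000, burst=(400, 600), seed=0):
    """Hypothetical test data: low-amplitude noise with one high-amplitude burst."""
    rng = random.Random(seed)
    signal = []
    for i in range(n):
        amplitude = 1.0 if burst[0] <= i < burst[1] else 0.05
        signal.append(amplitude * rng.gauss(0.0, 1.0))
    return signal


signal = synthetic_signal()
envelope = emg_envelope(signal, window=50)
bursts = detect_bursts(envelope, threshold=0.3, min_len=50)
print(bursts)  # one (start, end) pair roughly covering the simulated burst
```

A production system would likely use many electrodes and a learned classifier rather than a single threshold, but the sketch shows the basic step of turning a noisy muscle signal into a discrete "gesture happened here" event.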

From Access to Fluency

Our team believes that we are moving out of the era of "Special Requirements" and into the era of "Native Capability." When we design for the most extreme communication needs, we end up with more expressive and intuitive tools for everyone.

A wearable that understands the spatial movements of a signer is a wearable that understands the world more deeply. By integrating haptic feedback, real-time visual translations, and neural-input controls, we are no longer just providing "access" to a text-based world. We are building a digital ecosystem that finally speaks the language of its users.

The future of sign language interfaces isn't found in a translation app on a smartphone; it is found in the glasses we wear and the way we move our hands in the space between us. We are finally building a world where the linguistic wall is a thing of the past.